New research shows how drones can maintain gigabit connections while flying at high speed

Drones are getting better at flying fast. Now they’re learning how to stay connected while doing it.

Researchers have developed an artificial intelligence system that allows drones to maintain ultra-fast wireless links even as they move rapidly through complex environments. The advance, described in a recent paper published in ETRI Journal, could transform emergency response, drone delivery, and future 6G networks, where losing a signal for even a moment can mean losing control.

The work tackles one of the hardest problems in modern wireless communication: how to keep a millimeter-wave signal locked onto a fast-moving target.

Why High-Speed Wireless Fails in the Air

Millimeter-wave wireless, which operates at frequencies of roughly 30 to 300 GHz, delivers enormous data rates, often multiple gigabits per second. These frequencies are a cornerstone of future 6G systems, as outlined in broader visions for next-generation networks published in IEEE Access.

But there’s a catch.

Unlike Wi-Fi or traditional cellular signals that spread widely, millimeter-wave transmissions behave like narrow beams. They must be aimed with extreme precision. Even small misalignments can break the connection entirely, a challenge well documented in surveys of millimeter-wave UAV communications published in IEEE Communications Surveys & Tutorials.
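To get a feel for how narrow these beams are, the sketch below computes the textbook normalized gain of a uniform linear antenna array with half-wavelength spacing as the target drifts off the beam center. The array sizes and the 1-degree offset are illustrative assumptions, not values from the ETRI Journal study, and the function name `ula_gain_db` is hypothetical.

```python
import math


def ula_gain_db(n_elements, offset_deg):
    """Normalized gain (dB) of a half-wavelength-spaced uniform linear array
    steered to broadside, evaluated offset_deg away from the beam center."""
    psi = math.pi * math.sin(math.radians(offset_deg))
    if abs(psi) < 1e-12:
        return 0.0  # perfectly aligned: full gain
    array_factor = abs(
        math.sin(n_elements * psi / 2) / (n_elements * math.sin(psi / 2))
    )
    # Clamp to avoid log(0) exactly at a pattern null.
    return 20 * math.log10(max(array_factor, 1e-12))


# Illustrative comparison (assumed array sizes): same 1-degree pointing error.
for n in (8, 64):
    print(n, "elements:", round(ula_gain_db(n, 1.0), 2), "dB")
```

Under these assumptions, the larger array loses noticeably more gain for the same small pointing error, which is why alignment becomes more demanding as beams get narrower.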

For drones, this is especially difficult. They move in three dimensions, accelerate quickly, and operate in environments filled with buildings, terrain, and obstacles.

Traditional beam-tracking systems work reactively: they constantly measure signal strength and adjust the beam direction after the fact. According to the ETRI Journal study, this reactive approach creates too much delay in dynamic aerial scenarios. By the time the system reacts, the drone has already moved.

Predicting Motion Instead of Chasing Signals

The new approach flips the problem around.

Instead of reacting to signal loss, the AI predicts where the drone will be next.
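The difference can be illustrated with a toy calculation. The sketch below assumes a drone sweeping across the sky at a constant angular rate and a tracker whose measurements arrive with a fixed latency. Both numbers, and the simple linear extrapolation standing in for the predictor, are assumptions for illustration; the system in the study learns its predictions from camera and GPS data rather than using this formula.

```python
def mean_pointing_errors(rate_deg_s, latency_s, steps=100, dt=0.01):
    """Return (reactive, predictive) mean absolute pointing error in degrees
    for a target moving at a constant angular rate (toy model)."""
    reactive_total = 0.0
    predictive_total = 0.0
    for k in range(steps):
        t = k * dt
        true_angle = rate_deg_s * t
        # The two most recent measurements, both delayed by latency_s.
        stale = rate_deg_s * (t - latency_s)
        older = rate_deg_s * (t - latency_s - dt)
        # Reactive: point the beam at the last (stale) measured angle.
        reactive_total += abs(true_angle - stale)
        # Predictive: estimate the rate and extrapolate across the latency.
        estimated_rate = (stale - older) / dt
        predicted = stale + estimated_rate * latency_s
        predictive_total += abs(true_angle - predicted)
    return reactive_total / steps, predictive_total / steps


# Assumed values: 20 degrees per second of apparent motion, 50 ms of latency.
reactive, predictive = mean_pointing_errors(20.0, 0.05)
print("reactive error (deg):", reactive)
print("predictive error (deg):", predictive)
```

In this idealized case the reactive tracker lags by the angular rate multiplied by the latency, while extrapolation removes almost all of the lag. Real flights include turns and acceleration, which is where richer inputs than past angles become useful.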

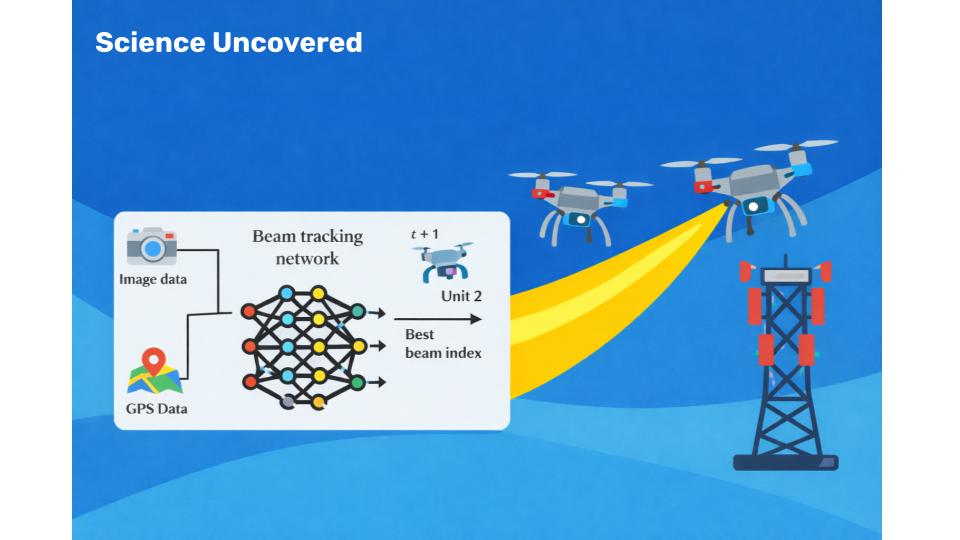

The system fuses two data sources drones already carry onboard: cameras and GPS. Camera images capture environmental context and obstacles ahead. GPS provides absolute position and recent motion history. Together, they allow the AI to anticipate future movement rather than infer it from fading signals.

This idea builds on earlier research presented at IEEE GLOBECOM, which explored position- and camera-aided beam prediction for drone communications, but extends it with deeper multimodal learning and real-world validation.

Tested in the Real World

To evaluate the system, the researchers used the DeepSense 6G dataset, a large-scale real-world multimodal dataset that includes drone flights paired with millimeter-wave measurements.

Unlike simulations, DeepSense 6G captures messy reality: sudden turns, changing speeds, urban canyons, open spaces, and unpredictable flight paths.

The multimodal AI system improved beam prediction accuracy by more than 24 percent compared to GPS-only approaches. It also significantly outperformed beam-tracking methods originally designed for ground vehicles, such as those described in IEEE Transactions on Vehicular Technology.

In practical terms, more accurate beam prediction means a drone can hold a high-quality connection more consistently, with fewer dropped packets and less time spent searching for the correct beam direction.
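Beam prediction results like these are commonly scored with top-k accuracy: the fraction of samples in which the truly best beam is among the k beams the model ranks highest. The exact metric behind the 24 percent figure is not detailed here, so the sketch below is a generic illustration. The scores and labels are made-up toy values, not data from DeepSense 6G, and `top_k_accuracy` is a hypothetical helper name.

```python
def top_k_accuracy(scores, true_beams, k=1):
    """Fraction of samples whose true best beam index is among the k
    highest-scoring beams predicted by the model."""
    hits = 0
    for row, truth in zip(scores, true_beams):
        # Beam indices sorted from highest to lowest predicted score.
        ranked = sorted(range(len(row)), key=lambda i: row[i], reverse=True)
        if truth in ranked[:k]:
            hits += 1
    return hits / len(true_beams)


# Toy example: 3 samples, 3 candidate beams each (values are invented).
toy_scores = [
    [0.1, 0.7, 0.2],
    [0.5, 0.3, 0.2],
    [0.2, 0.3, 0.5],
]
toy_truth = [1, 1, 2]

print("top-1:", top_k_accuracy(toy_scores, toy_truth, k=1))
print("top-2:", top_k_accuracy(toy_scores, toy_truth, k=2))
```

Reporting top-k for several values of k shows how many candidate beams a receiver would still need to check after the prediction, which relates directly to the search time mentioned above.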

Why This Matters Right Now

Reliable drone connectivity is no longer optional.

Emergency responders increasingly rely on drones for live video during disasters and search-and-rescue missions. Delivery companies are expanding drone trials over populated areas, where stable command-and-control links are critical. Utilities use drones to inspect power lines, pipelines, and towers, reducing risk to human workers.

At the same time, future wireless networks are moving toward even higher frequencies, including millimeter-wave and terahertz bands, as explored in recent Nature Photonics research on next-generation antenna technologies.

The same beam-tracking problems drones face today will soon affect autonomous vehicles, robots, and mobile devices.

Smarter Through Sensor Fusion

GPS alone knows where a drone is, but not what lies ahead. Cameras see obstacles and openings, but lack absolute position.

The strength of this system lies in combining both.

A fusion layer allows visual and positional data to inform each other. When GPS data shows the drone approaching a specific location, the camera can flag buildings or terrain in the path. When the camera detects open sky, GPS motion history indicates whether the drone is actually heading into it.

This shared context allows proactive beam steering rather than last-second correction.
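A minimal sketch of the idea, assuming the simplest possible fusion: camera and GPS features are concatenated and passed through a single linear layer that scores each candidate beam. The feature values, weights, and the function name `fuse_and_predict` are hypothetical, and the model in the study is a deep network, not this one layer.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_predict(camera_features, gps_features, weights, bias):
    """Concatenate both feature vectors, apply one linear layer, and
    return (best beam index, per-beam probabilities)."""
    fused = list(camera_features) + list(gps_features)
    logits = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, bias)
    ]
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    return best, probs


# Invented inputs: 2 camera features, 1 GPS feature, 3 candidate beams.
camera = [1.0, 0.0]
gps = [0.5]
weights = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 4.0],
]
bias = [0.0, 0.0, 0.0]

beam, probabilities = fuse_and_predict(camera, gps, weights, bias)
print("predicted beam:", beam)
```

Because every output score depends on the full concatenated vector, neither sensor decides alone; in a trained network the weights would be learned from flight data rather than written by hand.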

Built for Lightweight Drones

Importantly, the system avoids heavy sensors like LiDAR or radar, which add weight and drain power.

Cameras and GPS receivers are already standard on most drones. The innovation comes from extracting more intelligence from existing hardware, not adding new components.

That design choice makes real-world deployment far more practical.

What Still Needs Work

Challenges remain before commercial adoption.

The AI must run in real time on small onboard processors, requiring further optimization. The model also needs to generalize across new environments, weather conditions, and drone platforms, challenges highlighted in prior UAV beam-tracking research.

Still, the core concept has been demonstrated on real-world flight data.

The Bigger Shift

This research reflects a broader change in wireless engineering.

Instead of reacting to signal statistics, systems are beginning to observe the physical world directly and predict what will happen next. Movement, environment, and intent become first-class inputs.

As wireless systems grow faster and operate at higher frequencies, this shift from reactive control to predictive intelligence may be unavoidable.

The Takeaway

By combining camera vision and GPS data, researchers have shown that AI can help drones maintain high-speed wireless connections by predicting motion rather than chasing lost signals.

It’s a step toward more reliable drones, smarter networks, and a future where gigabit connectivity follows moving devices without interruption.

In the era of autonomous systems and 6G wireless, prediction isn’t just useful. It’s essential.

References

1. Deep Learning-Based Multimodal Beam Tracking for Unmanned Aerial Vehicle Communication. Yeo, J. et al. (2026). ETRI Journal.

2. 6G and Beyond: The Future of Wireless Communications Systems. Akyildiz, I. F., Kak, A., & Nie, S. (2020). IEEE Access.

3. A Survey on Millimeter-Wave Beamforming Enabled UAV Communications and Networking. IEEE Communications Surveys & Tutorials.

4. Position and Camera Aided Beam Prediction for Real-World 6G Drone Communication. Charan, G. et al. (2022). IEEE Global Communications Conference (GLOBECOM).

5. DeepSense 6G Dataset: A Large-Scale Real-World Multimodal Sensing and Communication Dataset. Alkhateeb, A. et al. Arizona State University, 6G@UT.

6. KF-LSTM Based Beam Tracking for UAV-Assisted Millimeter-Wave Wireless Networks. Yan, L. et al. (2022). IEEE Transactions on Vehicular Technology.

7. On-Chip Topological Leaky-Wave Antenna for Full-Space Terahertz Wireless Connectivity. Wang, W. et al. (2026). Nature Photonics.

Ray Jackson holds a BSc in Electrical Engineering from the University of Manitoba and a PhD in Physics from Carleton University. His reporting interests include Current and Future Technologies, Engineering and Artificial Intelligence.