A neural network from researchers at Princeton and Macquarie University has figured out how to share a single antenna beam between radar and communications, without being shown a single correct answer.

There is a problem sitting at the heart of 6G wireless technology that mathematicians have not been able to fully solve.

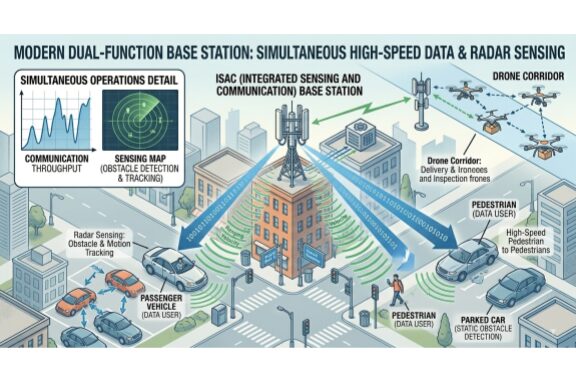

When a base station needs to simultaneously track objects in its environment and send data to nearby devices, it has to do both jobs with the same antenna, at the same time, using the same slice of radio spectrum. Give more resources to communications and sensing suffers. Give more to sensing and your data speeds drop. Deriving a formula that finds the perfect balance, under real-world conditions, has proven to be mathematically intractable.

Now a team of researchers from the University of Moratuwa in Sri Lanka, Macquarie University in Australia, Harbin Institute of Technology in China, and Princeton University in the United States thinks a neural network may have cracked it by teaching itself, without ever being told the answers.

Their paper, published in the journal Entropy in February 2026, introduces a system called BeamNet.

The Problem With Sharing One Antenna

Modern wireless systems increasingly need to do two things at once. A base station on a smart city street corner might need to send high-speed data to the cars and pedestrians around it while simultaneously acting as a radar, detecting obstacles and tracking movement. Future self-driving car networks and drone corridors will depend on exactly this kind of setup.

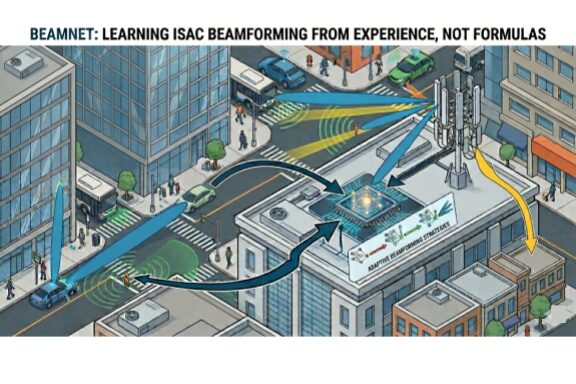

The technical term for it is Integrated Sensing and Communication, or ISAC. The key challenge inside ISAC is beamforming: deciding how to shape and aim the antenna’s signal to serve both tasks at once. Think of it like a spotlight operator at a concert who has to illuminate both the lead singer and the drummer simultaneously with a single beam of light. There is an inherent tradeoff, and finding the sweet spot is the whole game.
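To make the tradeoff concrete, here is a minimal sketch in Python. It is not taken from the paper: the eight-element half-wavelength antenna array, the two angles, and the `blended_beam` mixing rule are all illustrative assumptions. It measures how much of a unit-power beam lands on a user and on a sensing target as the beam is tilted from one towards the other.

```python
import cmath
import math

def steering(n, angle_deg):
    """Unit-norm steering vector for an n-element half-wavelength array (assumed geometry)."""
    phase = math.pi * math.sin(math.radians(angle_deg))
    return [cmath.exp(1j * phase * k) / math.sqrt(n) for k in range(n)]

def gain(direction, w):
    """Fraction of beam power delivered along `direction`: |direction^H w|^2."""
    return abs(sum(d.conjugate() * x for d, x in zip(direction, w))) ** 2

def normalise(v):
    """Scale a vector to unit norm, i.e. a fixed transmit power budget."""
    norm = math.sqrt(sum(abs(x) ** 2 for x in v))
    return [x / norm for x in v]

def blended_beam(user, target, rho):
    """Hypothetical mixing rule: rho=1 aims only at the user, rho=0 only at the target."""
    return normalise([rho * u + (1 - rho) * t for u, t in zip(user, target)])

N = 8                        # assumed number of antennas
user = steering(N, 20.0)     # assumed user direction, in degrees
target = steering(N, -40.0)  # assumed target direction, in degrees

for rho in (1.0, 0.5, 0.0):
    w = blended_beam(user, target, rho)
    print(f"rho={rho}: user gain={gain(user, w):.3f}, target gain={gain(target, w):.3f}")
```

Because the total power is fixed, neither gain can exceed 1, and pushing one towards 1 pulls the other down. Choosing where to sit between those extremes is the beamforming problem.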

For years, engineers have tried to solve this with mathematics, deriving formulas that calculate the optimal beam direction for any given channel condition. Those formulas work well in theory, but they come with a critical assumption: that the base station knows the wireless channel perfectly. In reality, it never does. Channel estimates are always noisy. Buildings block and reflect signals, rain falls, vehicles pass through. The neat mathematical solution starts to break down as soon as the estimate has any error in it. That is the gap that no equation has been able to cleanly close.

What BeamNet Does Differently

BeamNet approaches the problem from a completely different direction. Instead of deriving a formula, it trains a neural network to learn the solution directly from experience.

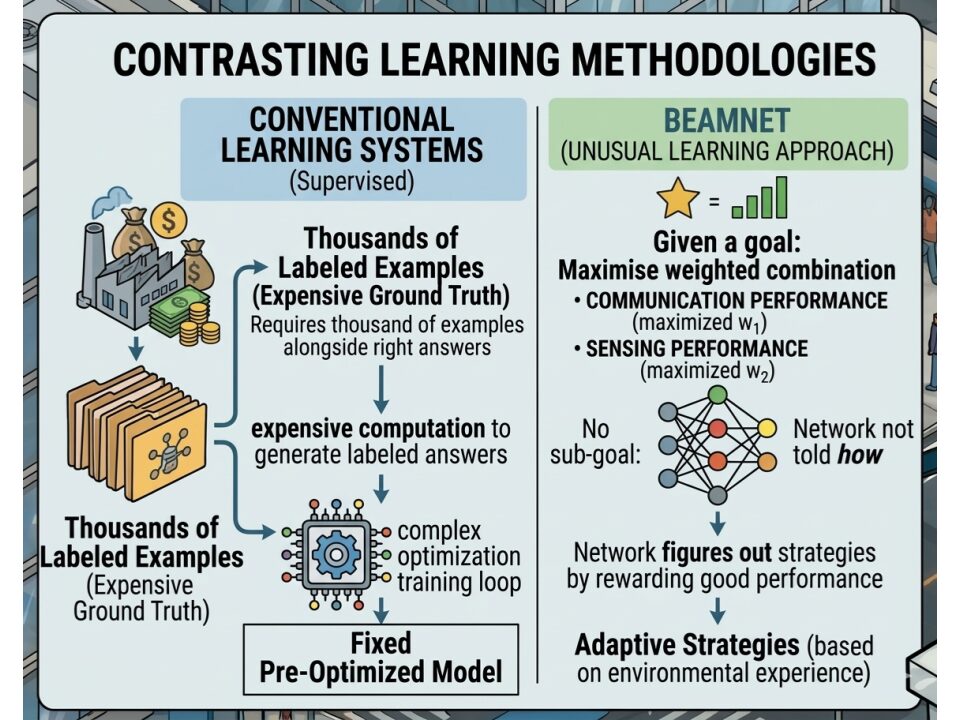

What makes it unusual is that it learns without being shown correct answers. In most machine learning systems, you train the model by showing it thousands of examples alongside the right answer for each one. Generating those labelled answers requires either expensive computation or embedding a whole optimisation solver inside the training loop.

BeamNet skips all of that. Instead, it is given a simple goal: maximise a weighted combination of how well the system is communicating and how well it is sensing. The network is not told how to achieve that goal. It figures out the beamforming strategy on its own, purely by being rewarded for doing both jobs well. The physics of the problem become the teacher.
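A label-free objective of this kind can be sketched in a few lines. Everything below is an assumption made for illustration rather than the paper's formulation: the weight `alpha`, the fixed `snr`, the logarithmic sensing score, and above all the optimiser, which is a simple random hill climb on one beam standing in for the gradient training of a neural network. The point it shows is that a candidate beam can be scored, and improved, with no "correct" beam to compare against.

```python
import math
import random

def inner(x, y):
    """Complex inner product x^H y."""
    return sum(a.conjugate() * b for a, b in zip(x, y))

def normalise(v):
    """Scale a vector to unit norm (fixed transmit power)."""
    norm = math.sqrt(sum(abs(x) ** 2 for x in v))
    return [x / norm for x in v]

def isac_loss(w, h, a, alpha, snr=10.0):
    """Negative weighted sum of a communication score and a sensing score.

    Assumed forms: log2(1 + snr * gain) for both; alpha weights communications.
    """
    comm = math.log2(1 + snr * abs(inner(h, w)) ** 2)
    sense = math.log2(1 + snr * abs(inner(a, w)) ** 2)
    return -(alpha * comm + (1 - alpha) * sense)

def hill_climb(h, a, alpha, steps=2000, step_size=0.1, seed=0):
    """Stand-in optimiser: keep a random perturbation only if the loss drops."""
    rng = random.Random(seed)
    w = normalise([complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in h])
    start = best = isac_loss(w, h, a, alpha)
    for _ in range(steps):
        trial = normalise(
            [x + step_size * complex(rng.gauss(0, 1), rng.gauss(0, 1)) for x in w]
        )
        loss = isac_loss(trial, h, a, alpha)
        if loss < best:  # no label needed, only the objective itself
            w, best = trial, loss
    return w, start, best

rng = random.Random(42)
h = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(4)]             # made-up user channel
a = normalise([complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(4)])  # made-up target direction
w, start, best = hill_climb(h, a, alpha=0.5)
print(f"loss before search: {start:.3f}, after: {best:.3f}")
```

In a learned system the loop would adjust the network's weights rather than a single beam, so that one trained model produces a beam for any channel it is shown.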

“Rather than relying on closed-form designs that assume perfect channel knowledge and specific fading laws, a deep learning-based approach can learn the mapping from observed channel realisations to beamforming vectors directly from data,” the authors wrote in the paper.

How Well Does It Actually Work?

The team tested BeamNet under three scenarios of increasing difficulty.

Under ideal conditions, where the channel is perfectly known, BeamNet reproduced the mathematically proven optimal solution. That is a sanity check, confirming the approach is sound.

Under more complex channel models where no closed-form solution exists, BeamNet was still able to map out the performance frontier, giving engineers a tool to understand the limits of their system even when the maths cannot provide an answer.
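A "performance frontier" here means the set of operating points where one score cannot be improved without giving up some of the other. The sketch below shows one generic way to pick such points out of a list of candidates. The numbers are invented for illustration and are not results from the paper, and `pareto_front` is a hypothetical helper name.

```python
def pareto_front(points):
    """Keep the points that no other point beats on both axes (higher is better)."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and q != p for q in points
        )
        if not dominated:
            front.append(p)
    return sorted(front)

# Invented (communication score, sensing score) pairs, for illustration only.
candidates = [
    (1.0, 4.0), (2.0, 3.5), (1.5, 3.0),
    (3.0, 2.0), (2.5, 1.0), (3.5, 0.5),
]

for comm, sense in pareto_front(candidates):
    print(f"communication={comm}, sensing={sense}")
```

Sweeping the weight in the training objective and recording the resulting pair of scores each time is one plausible way to generate such candidates.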

The most important result came under imperfect channel conditions, the scenario that actually exists in every real deployment. When the researchers took the best known analytical beamformer and applied it to a noisy, imperfect channel estimate, its performance degraded noticeably. BeamNet, trained specifically on noisy estimates, degraded far less. It had learned, without being told, to be robust to the kind of estimation error that real wireless systems always face.
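The first half of that result, a formula losing ground when fed a noisy estimate, is easy to reproduce in miniature. The sketch below is a simplified stand-in, not the paper's experiment: it uses maximum-ratio transmission (pointing the beam straight along the estimated channel, for communications only), a made-up random channel model, and an assumed error level `error_std`. It does not model BeamNet itself.

```python
import math
import random

def inner(x, y):
    """Complex inner product x^H y."""
    return sum(a.conjugate() * b for a, b in zip(x, y))

def mrt(channel):
    """Maximum-ratio transmission: unit-norm beam aligned with the given channel."""
    norm = math.sqrt(sum(abs(x) ** 2 for x in channel))
    return [x / norm for x in channel]

def average_gains(n_antennas=8, error_std=0.5, trials=500, seed=1):
    """Average gain on the true channel with a perfect versus a noisy estimate."""
    rng = random.Random(seed)

    def cgauss(std):
        return complex(rng.gauss(0, std), rng.gauss(0, std))

    perfect = mismatched = 0.0
    for _ in range(trials):
        h = [cgauss(1.0) for _ in range(n_antennas)]  # true channel (assumed model)
        h_hat = [x + cgauss(error_std) for x in h]    # noisy estimate of it
        perfect += abs(inner(h, mrt(h))) ** 2         # beam built from the truth
        mismatched += abs(inner(h, mrt(h_hat))) ** 2  # beam built from the estimate
    return perfect / trials, mismatched / trials

perfect, mismatched = average_gains()
print(f"perfect knowledge: {perfect:.2f}, noisy estimate: {mismatched:.2f}")
```

The beam built from the estimate can never beat the one built from the true channel, and the gap widens as `error_std` grows. A model trained on noisy estimates from the start has the chance to learn beams that hedge against that error.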

Why This Could Matter Beyond the Lab

One of BeamNet’s most practically useful properties is that it does not need to know what kind of wireless environment it is operating in. Base stations in a dense city, on an open highway, or inside a factory all experience different patterns of signal fading. Most mathematical solutions are designed for one specific pattern and perform poorly when conditions differ from what they assumed.

BeamNet’s training objective contains no such assumption. The same trained model generalises across different environments, which matters enormously if this approach is ever going to be deployed at scale across a real network, where no two locations are quite the same.

What Comes Next

The paper is a simulation study, so no real antenna hardware was involved. The system was also evaluated in a simplified scenario: one antenna array, one communications user, one sensing target. Real-world ISAC deployments will involve many users, many targets, and far more complex interactions between them.

With 6G standardisation accelerating and the low-altitude economy demanding real-time sensing from the same networks that carry data, the pressure to solve the sensing and communications tradeoff cleanly and practically is only going to intensify. BeamNet suggests that the solution may not come from a better equation. It may come from a network that taught itself the answer.

Sources

Quotes in this article are drawn from a paper published in Entropy (MDPI) by Nimnaka, Gayan, Zhang, Inaltekin, and Poor, accepted 27 January 2026, DOI: 10.3390/e28020175. The paper is open access and available in full at pmc.ncbi.nlm.nih.gov/articles/PMC12939161.

Ray Jackson holds a BSc in Electrical Engineering from the University of Manitoba and a PhD in Physics from Carleton University. His reporting interests include Current and Future Technologies, Engineering and Artificial Intelligence.